AI Movie Generator: Turn Scripts into Cinematic Films

Direct your own blockbuster. Transform written plots into stunning visual narratives with the world's most advanced AI movie generator.

Introduction

Filmmaking has historically been one of the most exclusive, expensive, and logistically complex art forms in existence. A single minute of a Hollywood blockbuster is the result of thousands of hours of labor, millions of dollars in equipment, and armies of specialists, from lighting gaffers and dolly grips to location scouts and craft services. This high barrier to entry has silenced countless storytellers who possess the vision of a Kubrick or a Spielberg but lack the budget of a studio executive.

FlowVideo AI's Movie Generator systematically dismantles these walls. We are entering the age of "Synthetic Cinema," an era in which one person can act as writer, director, cinematographer, and editor at once. Our tool converts a simple text prompt or a complex screenplay directly into high-definition video sequences. You define the genre, the lighting, the actors, the wardrobe, and the camera movements; the AI executes your vision, treating pixels as digital clay.

Whether you are an aspiring director creating a concept trailer to pitch to Netflix, a musician looking for a visual accompaniment to a track, or a marketer who needs a broadcast-quality commercial without the broadcast budget, the specialized AI movie engine speaks the language of cinema. It doesn't just generate random moving images; it models narrative flow, scene composition, emotional pacing, and visual continuity. This is your personal studio backlot: accessible from your browser, 24/7, with zero rental fees.

Why Use an AI Movie Generator? (Deep Dive)

Unbounded Creative Freedom

The Technology Behind Synthetic Cinema

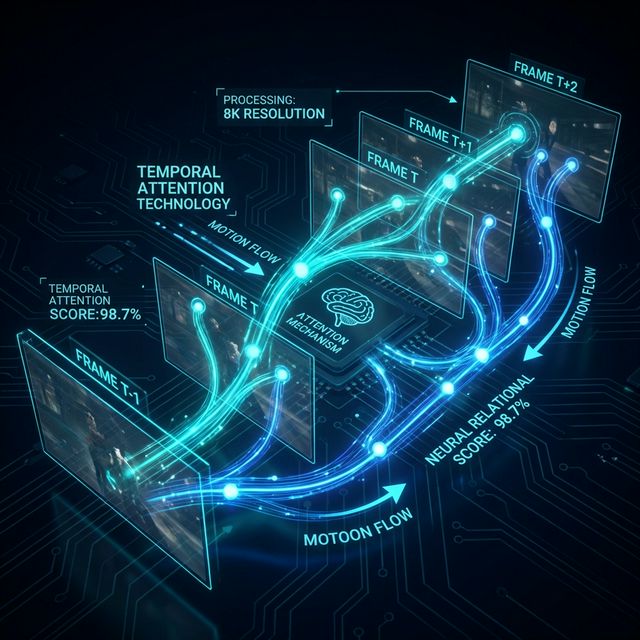

Temporal Attention Modules (The Memory)

Standard image generators create a single snapshot in time. Video models must create a sequence in which time flows logically. Our neural network uses "Temporal Attention Modules," similar in spirit to the transformer architectures behind OpenAI's Sora. These let the AI "remember" what happened in frame 1 when predicting what should happen in frame 24. If a car is driving left to right, the module keeps it from suddenly teleporting, changing color, or driving backwards, and it maintains inertia, momentum, and lighting consistency across the timeline.
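The idea of attending across frames can be illustrated with a minimal sketch of single-head, scaled dot-product self-attention along the time axis. This is not FlowVideo's implementation: the frame vectors are toy stand-ins for patch tokens, there are no learned query/key/value projections, and the function names are hypothetical. Each output frame is a softmax-weighted mix of every frame in the clip, which is what lets information from frame 1 influence frame 24.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    # Subtract the max before exponentiating for numerical stability.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def temporal_attention(frames: List[Vector]) -> List[Vector]:
    """Single-head self-attention across the time axis (toy version).

    Each frame is one feature vector. Queries, keys and values are the
    frame vectors themselves, i.e. no learned projection matrices.
    """
    d = len(frames[0])
    scale = 1.0 / math.sqrt(d)
    out = []
    for q in frames:
        # Scaled similarity of this frame to every frame in the clip.
        scores = [scale * sum(qi * ki for qi, ki in zip(q, k)) for k in frames]
        weights = softmax(scores)
        # The output is a weighted mix of all frames' values.
        out.append([sum(w * v[j] for w, v in zip(weights, frames)) for j in range(d)])
    return out
```

Because identical frames receive identical weights, a static shot passes through unchanged. A production video model would add learned projections, multiple heads, and attention over spatial positions as well.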

Space-Time Patches (Latent Diffusion)

The video is not generated pixel by pixel. It is generated as "Space-Time Patches": the AI treats video as a 3D volume (height, width, time) and denoises the entire clip at once rather than frame by frame. This is why, when a bird flies across the screen, its wings flap coherently: the model learns how the bird's 3D shape moves through the time dimension.
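To make "Space-Time Patches" concrete, here is a minimal sketch that cuts a single-channel T x H x W array into non-overlapping 3D blocks and flattens each block into one token. It is an illustration under stated assumptions, not the product's code: a latent diffusion model would patch a compressed latent video rather than raw pixels, and `to_spacetime_patches` is a hypothetical name.

```python
from typing import List

Video = List[List[List[float]]]  # indexed as video[t][y][x], one channel


def to_spacetime_patches(video: Video, pt: int, ph: int, pw: int) -> List[List[float]]:
    """Cut a T x H x W volume into non-overlapping pt x ph x pw blocks.

    Each block is flattened (time, then row, then column) into one token.
    Assumes T, H and W are exact multiples of the patch sizes; real
    systems pad or crop first.
    """
    T, H, W = len(video), len(video[0]), len(video[0][0])
    if T % pt or H % ph or W % pw:
        raise ValueError("video dimensions must be divisible by patch sizes")
    tokens = []
    for t0 in range(0, T, pt):
        for y0 in range(0, H, ph):
            for x0 in range(0, W, pw):
                tokens.append([
                    video[t][y][x]
                    for t in range(t0, t0 + pt)
                    for y in range(y0, y0 + ph)
                    for x in range(x0, x0 + pw)
                ])
    return tokens
```

Because each token spans several frames, a single token already carries a little motion, which is the intuition behind generating time and space together.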

Virtual Cinematography (Camera Control)

Our model was trained on millions of shots labeled with cinematic terminology, so it recognizes terms such as "Dolly Zoom," "Pan Left," "Dutch Angle," and "Close-Up." When you specify "Drone shot establishing a city," the AI simulates the physics of a drone camera: smooth acceleration, a high angle, and a wide field of view. This virtual camera control gives your AI movie a professional, directed feel. The AI acts as your Director of Photography (DP), automatically framing shots according to the Rule of Thirds or the Golden Ratio.
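A generative model does not literally compute lens formulas, but a convincing "Dolly Zoom" has to reproduce specific geometry. Under a simple pinhole-camera assumption, a subject's size in frame is proportional to focal length divided by distance, so keeping the subject the same size while the camera moves requires f_now = f_start * d_now / d_start, while the field of view (and therefore the background) changes around it. This sketch assumes a 36 mm-wide full-frame sensor; all names are hypothetical.

```python
import math
from typing import List, Tuple

SENSOR_WIDTH_MM = 36.0  # assumption: full-frame sensor width


def dolly_zoom_focal_length(f_start_mm: float, d_start_m: float, d_now_m: float) -> float:
    """Focal length that keeps the subject the same size in frame.

    Under a pinhole model, image size is proportional to
    focal_length / distance, so holding that ratio constant gives
    f_now = f_start * d_now / d_start.
    """
    return f_start_mm * d_now_m / d_start_m


def horizontal_fov_deg(f_mm: float, sensor_width_mm: float = SENSOR_WIDTH_MM) -> float:
    """Horizontal field of view of a rectilinear lens, in degrees."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * f_mm)))


def dolly_zoom_path(f_start_mm: float, d_start_m: float, d_end_m: float,
                    steps: int) -> List[Tuple[float, float, float]]:
    """Sample (distance, focal length, FOV) along a linear dolly move."""
    path = []
    for i in range(steps + 1):
        d = d_start_m + (d_end_m - d_start_m) * i / steps
        f = dolly_zoom_focal_length(f_start_mm, d_start_m, d)
        path.append((d, f, horizontal_fov_deg(f)))
    return path
```

For example, starting at 35 mm from 2 m away and dollying back to 4 m requires zooming to 70 mm, and the horizontal field of view narrows from roughly 54° to roughly 29°.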

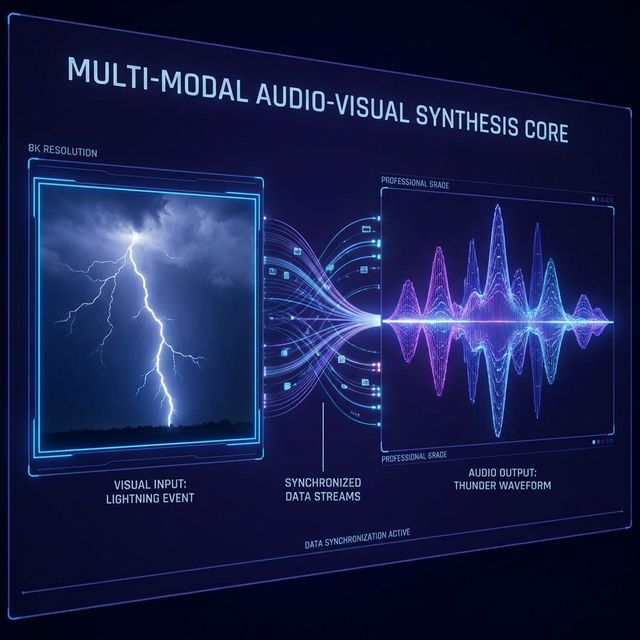

Multi-Modal Audio-Visual Synthesis

A movie isn't just pictures; sound is half the experience. Our "Co-Production" engine generates a synchronized audio track by analyzing the video pixels. If it sees a storm, it generates realistic thunder and rain; if it sees a car engine revving, it generates the roar of a V8; if it sees two people talking, it can generate lip-synced dialogue using our TTS engine. This multi-modal generation saves hours of sound design and Foley work in post-production.

Step-by-Step Guide: How to Make Your AI Movie

Develop Your Script/Plot (Pre-Production)

Start in the "Writer's Room" section. You can input your own script or use our AI Co-Pilot to brainstorm.

Good AI films start with structured prompts. Break your story down into "beats," or individual shots.

- Bad prompt: "A movie about a spy." (Too vague.)
- Good prompt: "Scene 1: Interior. Casino. Night. Medium shot of a spy in a tuxedo walking through the crowd. The lighting is moody and golden with bokeh background. He looks nervous. 8K resolution, cinematic lighting."
- Pro tip: Use the "Script-to-Video" mode if you have a full screenplay in standard format (sluglines, action, dialogue). The AI parses the scene headings automatically.
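How the "Script-to-Video" mode parses screenplays is not documented here, so the following is only a sketch of how standard scene headings (sluglines such as `INT. CASINO - NIGHT`) can be picked out of a plain-text screenplay with a regular expression. `SceneHeading` and `parse_sluglines` are hypothetical names, and headings with parentheticals like `DAY (LATER)` fall back to having no separate time of day.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

# Matches scene headings such as "INT. CASINO - NIGHT" or "EXT. ROOFTOP - DAY".
# Also accepts "INT./EXT.", "EXT./INT." and "I/E" prefixes. The time of day is
# optional and must be the last " - " segment in capital letters.
SLUGLINE = re.compile(
    r"^(?P<setting>INT\./EXT\.|EXT\./INT\.|I/E\.?|INT\.|EXT\.)\s+"
    r"(?P<location>.+?)"
    r"(?:\s+-\s+(?P<time>[A-Z ]+))?\s*$"
)


@dataclass
class SceneHeading:
    setting: str
    location: str
    time_of_day: Optional[str]


def parse_sluglines(screenplay: str) -> List[SceneHeading]:
    """Return the scene headings found in a plain-text screenplay."""
    headings = []
    for line in screenplay.splitlines():
        m = SLUGLINE.match(line.strip())
        if m:
            headings.append(SceneHeading(
                setting=m.group("setting"),
                location=m.group("location").strip(),
                time_of_day=m.group("time"),
            ))
    return headings
```

Each heading found this way marks the start of a scene, which can then be broken into the individual shot prompts described above.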

Define the Visual Style (The Look)

Before shooting, you pick your film stock and lens package. Choose a preset "Aesthetic Model":

- Film Noir: high contrast, black and white, deep shadows, smoke effects.
- Blockbuster: high saturation, teal and orange color grading, lens flares, high dynamic range (IMAX look).
- Vintage 16mm: grainy, soft focus, retro coloring, film burns, chromatic aberration.
- Animation: Pixar-style 3D or Japanese anime style (if making an animated film).

Consistency: this "Global Style" setting ensures that all your generated clips feel like they belong to the same movie universe, preventing jarring visual shifts between shots.
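One way to approximate a "Global Style" setting with a text-only video generator is to append the same style descriptor to every shot prompt, as in this minimal sketch. The preset keys and descriptor strings are assumptions condensed from the preset descriptions above, and FlowVideo may apply its presets differently (for example, as model conditioning rather than prompt text).

```python
from typing import Dict, List

# Hypothetical preset table condensed from the preset descriptions.
STYLE_PRESETS: Dict[str, str] = {
    "film_noir": "high contrast, black and white, deep shadows, smoke",
    "blockbuster": "high saturation, teal and orange grade, lens flares, HDR",
    "vintage_16mm": "film grain, soft focus, retro color, film burns, chromatic aberration",
}


def apply_global_style(shot_prompts: List[str], preset: str) -> List[str]:
    """Append the same style descriptor to every shot so the clips match."""
    style = STYLE_PRESETS[preset]
    # Strip trailing periods/spaces so each prompt ends with exactly one period.
    return [f"{p.rstrip('. ')}. Style: {style}." for p in shot_prompts]
```

Keeping the style text identical across shots, rather than rewording it per prompt, is what prevents drift between clips.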

Scene Generation (The Shoot)

Generate your clips one by one. This is "production."

- Enter your prompt for Shot 1 and click "Action."
- Variations: the AI generates 4 variations ("takes"). Watch them all. Maybe in Take 1 the actor looks too angry, but in Take 3 the lighting is perfect. Pick the best one.
- Iterate: if none are perfect, tweak the prompt (e.g., add "more fog" or "closer camera") and re-roll. This iterative process lets you curate the best performances, just like a director on set asking for "one more take."
- Duration: standard clips are 4 seconds. You can extend them to 8 or 12 seconds if the shot requires a long, slow movement.

Editing and Assembly (Post-Production)

Once you have your "dailies" (the raw generated clips), go to the Workspace Editor and drag your clips onto the timeline.

- Trimming: cut the awkward beginning or end of a clip where the AI's motion may still be settling. Start each shot in the middle of the action.
- Transitions: add cross-dissolves, fades to black, or hard cuts between scenes to control the pacing. Use "match cuts" (cutting between similar shapes) for artistic effect.
- Sound mixing: layer in the generated dialogue and music. The editor supports multi-track audio mixing, so background music can swell during dramatic moments and dip when characters are speaking.
- Color grading: apply a final LUT (Lookup Table) to glue all the shots together visually.
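Letting music dip under dialogue is usually called ducking. The sketch below shows one simple way to compute it: one gain value per audio block, targeting a fixed reduction in decibels while dialogue is active, with a one-pole smoother so the music fades rather than jumping. The -12 dB default, the block-based input, and the function names are assumptions; the editor's actual mixer controls are not described in this guide.

```python
from typing import List


def db_to_gain(db: float) -> float:
    """Convert decibels to a linear amplitude multiplier."""
    return 10 ** (db / 20)


def duck_music(dialogue_active: List[bool], duck_db: float = -12.0,
               smoothing: float = 0.5) -> List[float]:
    """Per-block music gain that dips while a character is speaking.

    dialogue_active holds one flag per audio block (e.g. 50 ms each).
    The target gain is 1.0 without dialogue and db_to_gain(duck_db) with
    dialogue; a one-pole smoother moves toward the target so the music
    fades instead of jumping. smoothing=0.0 means instant changes.
    """
    ducked = db_to_gain(duck_db)
    gain = 1.0
    gains = []
    for active in dialogue_active:
        target = ducked if active else 1.0
        gain = smoothing * gain + (1 - smoothing) * target
        gains.append(gain)
    return gains
```

Multiplying each block of the music track by these gains produces the dip; larger `smoothing` values give slower, gentler fades.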

Traditional Filmmaking vs. Synthetic Cinema

| Factor | Traditional Filmmaking | Synthetic Cinema (AI) |
|---|---|---|
| Budget | High ($1,000 - $100M) | Low ($0 - $50) |
| Crew Size | 10 - 100+ people | 1 director (you) |
| Location | Weather/permit dependent | Infinite virtual locations |
| Post-Production | Editing, color grading, VFX | Integrated/prompt-based |
| Speed | Months to years | Hours to days |

Industry Use Cases

Advertising Pre-Visualization ("Rip-o-matics")

Ad agencies use AI movie tools to create "rip-o-matics": mockup commercials used to pitch ideas to clients. Instead of using generic stock footage that doesn't quite fit, they generate custom footage that illustrates the concept precisely, helping them win million-dollar contracts.

Music Videos

Musicians are using AI to generate full music videos. The surreal, dreamlike quality of AI video often fits perfectly with electronic, techno, or alternative music. It allows independent artists to release a visual for every song on an album, not just the singles, increasing their YouTube footprint.

Real Estate and Architecture

Architects use cinematic fly-throughs to showcase buildings that haven't been built yet. They can generate a movie showing "A day in the life" of a resident in a new complex, complete with simulated people and traffic, selling the lifestyle before the ground is broken.

What Users Are Saying

The next generation of Spielbergs is already here.

Carlos V.

Indie Filmmaker

“Created my entire concept trailer without a crew. Investors thought I had a $50K budget.”

Emma S.

Music Artist, Spotify

“Every song on my album now has a unique music video. The surreal AI style fits my aesthetic perfectly.”

Jason L.

Ad Agency Creative Director

“Our pitch presentations now include fully rendered commercial mockups. Win rate up 40%.”

Troubleshooting Common Movie Glitches

Characters look inconsistent between shots.

Use the "Face Swap" tool on the generated clips. Upload a photo of your intended actor, and the AI will paste that face onto the generated body in every shot, ensuring identity persistence.

Text on signs is garbled.

AI video models often struggle to render legible text. Use the "In-painting" tool (Pro) to mask out the garbled text and replace it, or avoid shots with written signs.

Movement looks unnatural or "floaty".

This happens when the AI doesn't understand the gravity of the scene. Add "heavy weight," "realistic physics," or "grounded" to your prompt.

The 180-Degree Rule.

If two characters are talking, keep the camera on one side of the imaginary line (the axis of action) that runs between them, and prompt the camera angle explicitly (e.g., "Over the shoulder shot from left") so they don't appear to swap positions between cuts.
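When planning coverage, you can check a shot list against the 180-degree rule on a top-down floor plan before writing any prompts. This sketch (hypothetical names and coordinates) uses the sign of a 2D cross product to tell which side of the axis of action each camera position is on, and flags consecutive shots that jump to the opposite side. A camera exactly on the axis counts as neutral, so a neutral shot placed between the two sides is not flagged.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # top-down (x, y) floor-plan coordinates


def side_of_axis(a: Point, b: Point, camera: Point) -> int:
    """Return +1 or -1 for the side of line A->B the camera is on, 0 if on it."""
    cross = (b[0] - a[0]) * (camera[1] - a[1]) - (b[1] - a[1]) * (camera[0] - a[0])
    return (cross > 0) - (cross < 0)


def crosses_the_line(a: Point, b: Point, cameras: List[Point]) -> bool:
    """True if two consecutive shots put the camera on opposite sides."""
    sides = [side_of_axis(a, b, c) for c in cameras]
    return any(s1 * s2 < 0 for s1, s2 in zip(sides, sides[1:]))
```

With characters A at (0, 0) and B at (4, 0), cameras at (2, -3) and (1, -2) stay on the same side, while a cut from (2, -3) to (3, 2) crosses the line.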

Frequently Asked Questions about the AI Movie Generator

How an AI Movie Generator Transforms Storytelling for Filmmakers in 2026

From Script Page to Screen: The New Filmmaking Pipeline

Producing a short film used to require location scouting, crew coordination, and equipment rentals that could stretch budgets past the breaking point. An AI movie generator changes that equation entirely. With FlowVideo, you start by writing or pasting a screenplay into the platform, then the system breaks your narrative into individual shots. Each shot receives its own text prompt describing the framing, lighting, mood, and action. The result is a sequence of HD video clips that follow your story from the opening frame to the closing credits. Because every clip is generated from your specific instructions, you maintain creative authority over casting choices, color palettes, and camera angles without hiring a single grip or gaffer. Independent creators who previously shelved ambitious projects due to cost constraints are now completing concept trailers, pitch reels, and festival submissions from a single workstation.

Character Persistence and Visual Continuity Across Scenes

One of the biggest technical hurdles in generated video has been keeping characters consistent from one shot to the next. FlowVideo addresses this with Character Persistence technology. Before you begin generating scenes, you create a character sheet that locks in facial features, wardrobe details, and body proportions. The AI movie engine references this sheet every time it renders a new clip, so your protagonist in scene one looks identical in scene forty. This continuity is what separates a random collection of clips from a coherent narrative film. Audiences need to recognize the same hero, the same villain, and the same world across every cut. Without that consistency, suspension of disbelief collapses. The character sheet approach also speeds up production because you stop wasting takes on identity drift and spend more time refining performances and compositions.

Virtual Cinematography: Controlling the Camera Without Physical Gear

Professional filmmaking relies heavily on deliberate camera movement. A slow dolly push conveys intimacy. A high-angle drone shot establishes geography. A Dutch angle signals psychological tension. FlowVideo's virtual cinematography system understands these conventions because it was trained on millions of labeled shots using standard film terminology. When you write "dolly zoom" or "pan left" in your prompt, the system simulates the physics of that movement, including acceleration curves and focal length shifts. Pro workspace users can fine-tune pan, tilt, and zoom values with sliders for frame-level precision. This level of camera control means your AI movie carries the same directed, intentional quality that audiences associate with theatrical releases rather than looking like a string of static images stitched together.
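The acceleration curves of a camera move can be illustrated with an ease-in-out function. This sketch uses smoothstep, s(t) = 3t^2 - 2t^3, which starts and ends with zero velocity, to generate a pan angle for each frame. Whether FlowVideo's pan, tilt, and zoom sliders use this particular curve is not stated; the function names are hypothetical.

```python
from typing import List


def smoothstep(t: float) -> float:
    """Ease-in-out curve on [0, 1] with zero velocity at both ends."""
    t = min(max(t, 0.0), 1.0)  # clamp so out-of-range inputs hold the endpoints
    return t * t * (3 - 2 * t)


def pan_keyframes(start_deg: float, end_deg: float, frames: int) -> List[float]:
    """Pan angle for each frame, accelerating from rest and settling to rest.

    frames must be at least 2 so the first and last frames land exactly
    on the start and end angles.
    """
    if frames < 2:
        raise ValueError("need at least two frames")
    return [start_deg + (end_deg - start_deg) * smoothstep(i / (frames - 1))
            for i in range(frames)]
```

For a 90° pan over 5 frames this yields 0, 14.0625, 45, 75.9375, and 90 degrees: small steps near the ends and larger steps in the middle, which is what reads as a physical camera gaining and losing momentum.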

Sound Design and Multi-Modal Audio Synthesis

A film without sound is only half finished. FlowVideo generates synchronized audio tracks alongside your video clips by analyzing what appears on screen. If the scene shows rain hitting pavement, the engine produces ambient rainfall and distant thunder. If a character speaks, the text-to-speech module creates lip-synced dialogue that matches the on-screen mouth movements. You can layer these generated tracks with background music and specific foley effects using the built-in multi-track audio mixer. This integrated approach to sound design eliminates the need to source stock audio libraries or record custom effects in a studio. For creators working on tight deadlines, having picture and sound generated in a single pass reduces post-production time from weeks to hours.

Practical Workflows: Advertising, Music Videos, and Architecture

The AI movie generator serves different industries in distinct ways. Advertising agencies build rip-o-matics, which are rough mockup commercials used to pitch concepts to clients before committing to a full production budget. Musicians produce surreal, visually striking music videos for every track on an album instead of only the lead single, expanding their presence across streaming platforms. Architects generate cinematic fly-throughs of buildings that exist only as blueprints, letting prospective buyers experience a space before construction begins. Educators create historical reenactment sequences for classroom use. Each of these workflows follows the same core process: write the scene description, select a visual style preset, generate clips, and assemble them in the timeline editor. The difference lies in the prompts you write and the aesthetic models you choose, not in the tool itself.

Getting Started: From First Prompt to Final Export

Begin by opening the Writer's Room and structuring your story into numbered beats, where each beat corresponds to one shot. Write detailed prompts that specify interior or exterior setting, time of day, subject action, and camera movement. Select a global style preset such as Film Noir, Blockbuster, or Vintage 16mm to ensure visual consistency across all generated clips. Click generate and review the four variations the system produces for each shot, picking the take with the best lighting and composition. Drag your selected clips onto the timeline, trim any settling frames at the head or tail, add transitions, and mix your audio layers. Apply a final color grade LUT to unify the footage, then export at up to 4K resolution with the Pro upscaler. The entire process from script to finished short film can happen in a single afternoon, making FlowVideo a practical production tool rather than a novelty experiment.
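A LUT is simply a table that remaps color values. The sketch below applies a 1D LUT with linear interpolation to each channel of each pixel, which is enough to show how one shared curve can unify footage from different clips. It is a simplification: grading LUTs are often 3D (for example, `.cube` files) and remap RGB triplets jointly, and the names here are hypothetical.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[float, float, float]  # channel values in [0.0, 1.0]


def sample_lut(lut: Sequence[float], x: float) -> float:
    """Look up x in a 1D LUT, interpolating linearly between entries."""
    x = min(max(x, 0.0), 1.0)  # clamp out-of-range input
    pos = x * (len(lut) - 1)   # fractional index into the table
    i = int(pos)
    if i >= len(lut) - 1:
        return lut[-1]
    frac = pos - i
    return lut[i] + (lut[i + 1] - lut[i]) * frac


def grade_frame(pixels: List[RGB], lut: Sequence[float]) -> List[RGB]:
    """Apply the same 1D curve to every channel of every pixel."""
    return [tuple(sample_lut(lut, c) for c in px) for px in pixels]
```

With the contrast curve `[0.0, 0.1, 0.5, 0.9, 1.0]`, the pixel `(0.25, 0.5, 0.75)` becomes `(0.1, 0.5, 0.9)`: shadows get darker and highlights brighter while midtones stay put.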