Free Movie Generator

Create AI Films & Shorts

Filmmaking is the most expensive art form. To make a movie, you need a camera ($5,000), lights ($2,000), actors (daily rate), locations (permits), and a crew. This financial barrier has silenced millions of potential Spielbergs. FlowVideo AI destroys this barrier. Our Free Movie Generator is a "Studio in a Cloud." It allows you to generate cinematic shots, control camera movement, and direct AI actors using text prompts. You are no longer limited by your budget; you are only limited by your imagination.

Introduction: The Democratization of Cinema

We are entering the era of "Synthetic Cinema." In the past, if you wanted a shot of "A samurai fighting a dragon on a burning spaceship," you needed $50 million of CGI. Now, you need a one-sentence prompt.

AI Video models (like Sora, Kling, and FlowVideo's internal engine) understand the language of film. They know what a "Dolly Zoom" is. They know "Golden Hour" lighting. They understand "Film Grain."

This tool allows you to piece together a narrative, shot by shot. It manages Character Consistency (so the actor looks the same in Scene 1 and Scene 5) and Temporal Coherence (so physics behaves correctly). It is the ultimate pre-visualization and production tool for the indie filmmaker.

Why Use a Movie Generator? (Deep Dive)

Cinematic Diffusion (The "Look")

The Technology: Temporal Coherence

Long-Context Windows

The Issue: AI videos often morph into hallucinations after 2 seconds. The cat turns into a dog.

The Tech: Our model uses a "Sliding Window Attention Mechanism." It constantly looks back at the first frame of the video while generating the last frame. This ensures that the texture of the wall or the color of the shirt remains constant throughout the 5-10 second clip.
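As a rough illustration of the idea, the sketch below builds an attention mask in which every frame can see the first frame plus a short window of recent frames. This is an assumption about how such a mechanism could be arranged, not FlowVideo's actual implementation; the function name `anchored_window_mask` and the `window` parameter are hypothetical.

```python
def anchored_window_mask(num_frames: int, window: int) -> list[list[bool]]:
    """Return mask[i][j] == True when frame i may attend to frame j.

    Hypothetical sketch: each frame sees frame 0 (the anchor) and the
    `window` most recent frames up to and including itself.
    """
    mask = []
    for i in range(num_frames):
        row = []
        for j in range(num_frames):
            is_anchor = j == 0               # the first frame is always visible
            in_window = 0 <= i - j < window  # causal window of recent frames
            row.append(is_anchor or in_window)
        mask.append(row)
    return mask


# Visualize which earlier frames each frame can reference.
for row in anchored_window_mask(num_frames=6, window=2):
    print("".join("x" if allowed else "." for allowed in row))
```

The anchor column is what keeps the wall texture or shirt color tied to the opening frame even after the sliding window has moved past it.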

Physics Simulation

The Issue: Water flowing upwards. Hair moving through metal.

The Tech: We have integrated a "World Model" that understands gravity and collision. If you generate "A cup falling," the AI predicts it should shatter on the floor, not bounce. While not perfect, it reduces the "Uncanny Valley" effect of bad physics.

Step-by-Step Guide: Directing Your First Short

Step 1: The Concept (Scripting)

- Tool: Use the integrated "Script Writer".
- Prompt: "Write a 1-minute Noir thriller scene. A detective walks in the rain. He sees a neon sign. He lights a cigarette."
- Output: The AI breaks this into a Shot List:
  - Shot 1: Wide shot, City Street, Rain.
  - Shot 2: Close up, Neon Sign "Bar".
  - Shot 3: Mid shot, Detective Face, Lighting cigarette.

Step 2: Character Design

- Action: Go to the "Assets" tab.
- Generate: "Portrait of a gritty detective, scar on cheek, stubble, fedora."
- Save: Save as Actor_1.

Step 3: Shot Generation (Production)

- Shot 1: Drag Actor_1 into the prompt.
  - Type: "Walking away from camera, heavy rain, reflection in puddle."
  - Camera: Set "Dolly Forward" speed to 2.
  - Generate.
- Shot 2:
  - Type: "Neon sign buzzing, electric sparks, cyberpunk colors."
  - Generate.
- Shot 3: Drag Actor_1.
  - Type: "Lighting cigarette, flame illuminates face."
  - Camera: Set "Static".
  - Generate.
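If you prefer to plan on paper before opening the tool, the three shots above can be written down as a small data structure. This is only a planning sketch: the `Shot` class and its field names are hypothetical and do not correspond to any FlowVideo API, and the "Static" default for Shot 2 is an assumption because the walkthrough does not specify a camera setting for it.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Shot:
    number: int
    prompt: str
    camera: str = "Static"       # assumed default when no camera move is given
    camera_speed: int = 0
    actor: Optional[str] = None  # saved asset name, e.g. "Actor_1"


shot_list = [
    Shot(1, "Walking away from camera, heavy rain, reflection in puddle.",
         camera="Dolly Forward", camera_speed=2, actor="Actor_1"),
    Shot(2, "Neon sign buzzing, electric sparks, cyberpunk colors."),
    Shot(3, "Lighting cigarette, flame illuminates face.",
         camera="Static", actor="Actor_1"),
]


def shots_with_actor(shots: list[Shot], actor: str) -> list[int]:
    """Return the shot numbers that reuse a given saved actor."""
    return [shot.number for shot in shots if shot.actor == actor]


print(shots_with_actor(shot_list, "Actor_1"))
```

Listing which shots reuse Actor_1 makes it easy to check character consistency across exactly those clips after generation.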

Step 4: Assembly (Editing)

- Timeline: Drag the 3 generated clips onto the FlowVideo Timeline.
- Transitions: Add a "Dissolve" between Shot 1 and Shot 2.
- Color: Apply the "Noir" LUT (Black and White with high contrast).

Step 5: Audio (Soundtrack)

- Music: Search "Jazz Trumpet Slow." Drag it down.
- SFX: Search "Rain Heavy." Search "Neon Buzz." Layer them.

Step 6: Export

- Format: 4K UHD.
- Codec: H.264 High Profile.

Comparison: AI Video Models

| Feature | OpenAI Sora | Runway Gen-2 | FlowVideo AI |
|---|---|---|---|
| Access | Closed Beta | Open | Open (Free) |
| Duration | 60s | 4s-16s | 5s-10s (Extendable) |
| Controls | Text only | Brushes | Camera Sliders + Face Lock |
| Sound | No | No | Auto-SFX Generation |
| Editor | No | Basic | Full NLE Integrated |

Industry Use Cases

Music Videos

- Concept: Visualizer for a bedroom pop song.
- Visuals: Psychedelic, morphing animations.
- Value: Creating a video that looks like a $20k production at no cost.

Pitch Decks (Film)

- Concept: Selling a script to Netflix.
- Visuals: "Sizzle Reel" of the mood and characters.
- Value: Showing the executive exactly what the movie will look like before filming a single frame.

Book Trailers

- Concept: Promoting a Fantasy Novel.
- Visuals: World-building shots of the castles and magic system.
- Value: Authors can visualize their own worlds for marketing.

What Users Are Saying

My sci-fi short won a festival award.

Tyler W.

Indie Filmmaker

“Created a 3-minute sci-fi trailer that won at an indie festival. Judges thought it was real CGI!”

Sophia R.

Music Producer, SoundCloud

“Every track on my EP now has a unique music video. The psychedelic AI style is perfect for electronic.”

Brandon K.

Author, Fantasy Genre

“My book trailer shows dragons and castles. Publishers loved it. Book sales up 200%.”

Troubleshooting: Directing Issues

Face Morphing

If a character turns their head quickly, the AI can lose track of the face. Use "Slow Motion" prompts ("Slowly turning head") to help the AI keep track of features.

Extra Fingers

AI struggles with hands. Try to frame shots where hands are holding something or are in pockets, or use the "Negative Prompt" feature: "Exclude: Bad hands, extra digits."

Flickering

Increase the "Guidance Scale". A higher scale (e.g., 15) forces the AI to stick strictly to the prompt, reducing random flickering artifacts.
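To see why a higher scale pulls the result toward the prompt, here is a minimal sketch that assumes the "Guidance Scale" setting behaves like classifier-free guidance in diffusion models. The page does not say which method FlowVideo uses, so treat this as an illustration of the general technique; `apply_guidance` is a hypothetical helper and the two-element lists stand in for the model's noise predictions.

```python
def apply_guidance(uncond: list[float], cond: list[float], scale: float) -> list[float]:
    """Blend unconditional and prompt-conditioned predictions.

    Classifier-free guidance: start from the unconditional prediction and
    move `scale` times the distance toward the prompt-conditioned one.
    """
    return [u + scale * (c - u) for u, c in zip(uncond, cond)]


uncond = [0.0, 1.0]  # toy prediction without the prompt
cond = [1.0, 3.0]    # toy prediction with the prompt

print(apply_guidance(uncond, cond, 1.0))   # scale 1 reproduces the conditioned prediction
print(apply_guidance(uncond, cond, 15.0))  # scale 15 pushes much further in the prompt direction
```

Under this assumption, the scale multiplies the difference between the "with prompt" and "without prompt" predictions, which is why larger values follow the prompt more strictly.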

Grainy

Use the "Upscale" button after generation. It uses a separate AI model (Real-ESRGAN) to clean up noise and sharpen edges for the final movie.

AI Film Production from Script to Screen: Cinematic Diffusion and Virtual Cinematography

Cinematic Diffusion: Training AI on the Language of Film

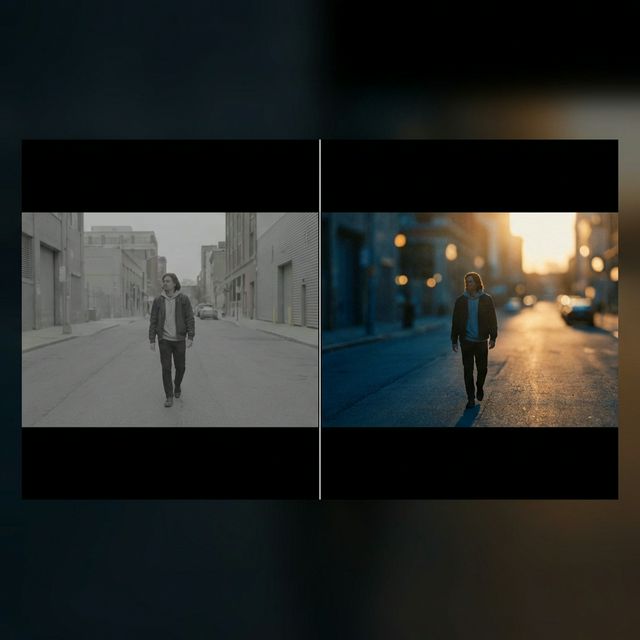

Standard text-to-video models produce output that looks like stock footage. The lighting is flat, the focus is uniformly sharp, and the composition feels arbitrary. A free movie generator built for cinematic production needs fundamentally different training data and output parameters. FlowVideo AI's model is fine-tuned on theatrical film, which means it defaults to behaviors that signal cinematic quality. The aspect ratio defaults to 2.35:1 anamorphic widescreen rather than 16:9 broadcast. The frame rate renders at 24 frames per second, the standard that gives film its characteristic motion cadence. The rendering pipeline simulates film grain, bokeh depth-of-field blur, and lens distortion artifacts that make footage feel organic rather than digitally sterile. These defaults matter because audiences have been trained by a century of cinema to associate specific visual characteristics with production quality. When your generated scene has shallow depth of field with a subject in sharp focus against a blurred background, it reads as professional filmmaking. When it has flat, even lighting with everything in focus, it reads as surveillance camera footage regardless of the content.
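The two numeric defaults mentioned above translate into concrete frame sizes and frame counts. The helpers below are a small arithmetic sketch, not part of any FlowVideo API; `frame_size` and `frame_count` are hypothetical names, and rounding the height down to an even number is an assumption made because 4:2:0 video formats need even dimensions.

```python
def frame_size(width: int, aspect: float = 2.35) -> tuple[int, int]:
    """Return (width, height) for a given width at a 2.35:1 aspect ratio."""
    height = round(width / aspect)
    height -= height % 2  # keep the height even for 4:2:0 chroma subsampling
    return width, height


def frame_count(seconds: float, fps: int = 24) -> int:
    """Return how many frames a clip of the given length contains."""
    return round(seconds * fps)


print(frame_size(1920))   # (1920, 816)
print(frame_size(3840))   # (3840, 1634)
print(frame_count(10))    # 240
```

A 10-second clip at 24 frames per second is therefore 240 frames, which is the span over which the model has to keep the scene consistent.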

Character Consistency Across Scenes: The Face-Locking Problem

The fundamental challenge separating AI video generation from actual filmmaking is character persistence. If you prompt a detective in a rainy street for scene one and a detective entering a bar for scene two, most systems generate two completely different people. This makes sequential storytelling impossible. FlowVideo AI addresses this through face-locking technology that stores a Face_ID vector from your initial character generation. When you create a character sheet, the system saves biometric anchor points: facial structure, skin tone, distinguishing features, and proportional relationships. In subsequent scenes, referencing that character name forces the generation model to impose the stored identity onto the new composition. The result is a recognizable character who maintains visual continuity across different shots, lighting conditions, and camera angles. This capability transforms the free movie generator from a random clip producer into an actual narrative tool. You can build a short film where the same protagonist appears in an establishing shot, a close-up dialogue scene, and an action sequence, maintaining audience identification throughout.
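One common way to check whether two faces share an identity is to compare embedding vectors with cosine similarity. The sketch below assumes the stored Face_ID can be treated as a plain list of floats, which the page does not confirm; the three-element vectors, the `same_identity` helper, and the 0.8 threshold are all hypothetical values chosen for illustration.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def same_identity(stored: list[float], candidate: list[float],
                  threshold: float = 0.8) -> bool:
    """Hypothetical check: does a generated face match the stored one?"""
    return cosine_similarity(stored, candidate) >= threshold


actor_1 = [1.0, 0.0, 0.0]         # toy stand-in for a stored Face_ID vector
scene_5_face = [0.9, 0.1, 0.0]    # close to the stored identity
different_face = [0.0, 1.0, 0.0]  # unrelated identity

print(same_identity(actor_1, scene_5_face))    # True
print(same_identity(actor_1, different_face))  # False
```

Real face embeddings have hundreds of dimensions, but the comparison step works the same way.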

Virtual Camera Controls: Pan, Tilt, Zoom, and Roll Sliders

Text prompts are notoriously unreliable for controlling camera movement in AI video. Typing "pan left" might produce a lateral shift, a scene with leftward motion, or nothing discernible. The free movie generator solves this with explicit camera control sliders that operate independently of the scene description prompt. Pan controls horizontal camera movement. Tilt controls vertical movement. Zoom adjusts focal length in or out. Roll applies a Dutch angle rotation. Each parameter accepts a speed value so you can create slow, contemplative dolly shots or rapid whip pans. These controls map directly to real cinematographic techniques. A slow dolly forward combined with a slight tilt up creates the classic reveal shot used in horror and suspense films. A static camera with zero movement creates the observational distance of documentary filmmaking. A rapid zoom combined with a counter-dolly creates the Hitchcock vertigo effect. By separating camera motion from scene content, the system gives directors precise control over visual storytelling language without requiring the AI to interpret ambiguous text commands.
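The vertigo effect works because a subject's size in frame is roughly proportional to focal length divided by camera distance, so the zoom has to change in step with the dolly. The sketch below computes that relationship for a real or virtual camera; it is not a description of how FlowVideo's sliders are implemented, and `dolly_zoom_focal_lengths` is a hypothetical helper.

```python
def dolly_zoom_focal_lengths(start_focal_mm: float, start_distance_m: float,
                             end_distance_m: float, steps: int) -> list[float]:
    """Focal lengths that keep the subject the same size during a dolly move.

    Subject size in frame is approximately focal_length / distance, so the
    focal length must scale with the camera-to-subject distance.
    Requires steps >= 2.
    """
    lengths = []
    for i in range(steps):
        t = i / (steps - 1)  # progress through the move, from 0.0 to 1.0
        distance = start_distance_m + t * (end_distance_m - start_distance_m)
        lengths.append(start_focal_mm * distance / start_distance_m)
    return lengths


# Dolly in from 4 m to 2 m while zooming out from 50 mm.
print(dolly_zoom_focal_lengths(50, 4, 2, steps=3))  # [50.0, 37.5, 25.0]
```

Halving the distance while halving the focal length holds the subject steady while the background appears to stretch away.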

Temporal Coherence: Preventing Mid-Scene Hallucinations

AI video models have a tendency to lose coherence after the first few seconds of generation. A scene that starts as a cat on a windowsill might gradually morph into a dog, or the wall texture behind the subject might shift from brick to wood between frames. This happens because the model's attention mechanism has a limited context window that struggles to maintain long-range consistency. FlowVideo AI uses a sliding window attention mechanism that continuously references the first frame while generating subsequent frames. The system anchors color values, object identities, spatial positions, and texture maps to the initial composition, preventing the characteristic drift that makes raw AI video feel dreamlike and unstable. The physics simulation layer adds further grounding by modeling gravity, collision, and material behavior. When you generate a cup falling off a table, the system predicts shattering on impact rather than the cup bouncing or phasing through the floor. While physics simulation is not yet perfect, it reduces the uncanny valley effect that immediately signals AI-generated content to viewers.

The Shot List Workflow: Building Narrative Through Sequential Generation

Professional filmmaking follows a structured production process: script, storyboard, shot list, production, and post-production. The free movie generator mirrors this workflow through its integrated script writer. You provide a narrative premise and the system decomposes it into individual shots with specific framing instructions. A one-minute noir thriller scene becomes a shot list: wide shot of a rainy city street, close-up of a neon sign, medium shot of the detective lighting a cigarette. Each shot is generated individually with camera and character parameters, then assembled on the timeline. This decomposition is critical because AI video models produce their best output in short clips of five to ten seconds. Attempting to generate an entire scene in one pass produces degraded quality and incoherent narrative flow. By breaking the narrative into discrete shots and controlling transitions between them, you leverage the model's strengths while working around its limitations, exactly as a real director works with individual takes.
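The five-to-ten-second clip length puts a floor on how many shots a scene needs. The sketch below splits a target running time into equal clips that fit that range; `plan_clips` is a hypothetical planning helper, and the equal-length split is an assumption made for simplicity, since a real shot list would vary clip lengths by content.

```python
import math


def plan_clips(total_seconds: float, min_len: float = 5.0,
               max_len: float = 10.0) -> list[float]:
    """Split a scene into the fewest equal clips no longer than max_len.

    Assumes max_len >= 2 * min_len so every clip also stays above min_len.
    """
    if total_seconds < min_len:
        raise ValueError("scene is shorter than the minimum clip length")
    count = math.ceil(total_seconds / max_len)
    return [total_seconds / count] * count


print(plan_clips(60))  # six 10-second clips for a one-minute scene
print(plan_clips(47))  # five clips of 9.4 seconds each
```

A one-minute scene therefore needs at least six generated clips before any extensions are applied.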

Lip-Sync Integration: Matching Generated Dialogue to Character Movement

The newest addition to the free movie generator is lip-sync engine integration. Previous versions could generate characters with mouths moving generically, but the movements bore no relationship to actual dialogue audio. The current system accepts a voice track, either recorded or generated through neural TTS, and maps phoneme timing to facial animation parameters. When the character says the word "beautiful," their lips close for the B, round for the "yoo" vowel, press the lower lip against the upper teeth for the F, and relax as the tongue forms the final L. This phoneme-accurate lip synchronization transforms generated characters from silent-film-era puppets into convincing speaking actors. The integration works bidirectionally. You can generate a character scene first and then match dialogue to the existing mouth movements, or you can provide the audio first and let the system generate character animation that matches the speech. Combined with face-locking for character consistency, this creates a production pipeline where indie filmmakers can produce dialogue-driven narrative content entirely through AI generation.
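Phoneme-to-mouth-shape mapping can be pictured as a lookup table plus timestamps converted to frame numbers. The sketch below is an illustration under stated assumptions, not FlowVideo's lip-sync engine: the viseme names, the `to_keyframes` helper, and the phoneme timings for "beautiful" are all hypothetical, and the phoneme symbols follow ARPAbet-style notation without stress marks.

```python
FPS = 24  # frame rate used elsewhere in this article

# Hypothetical mapping from phonemes to mouth shapes (visemes).
PHONEME_TO_VISEME = {
    "B": "lips_closed", "P": "lips_closed", "M": "lips_closed",
    "F": "lip_to_teeth", "V": "lip_to_teeth",
    "UW": "lips_rounded",
    "Y": "lips_spread",
    "T": "tongue_tip", "L": "tongue_tip",
    "AH": "open",
}


def to_keyframes(phonemes: list[tuple[str, float]]) -> list[tuple[int, str]]:
    """Convert (phoneme, start_seconds) pairs into (frame, viseme) keyframes."""
    keyframes = []
    for symbol, start in phonemes:
        viseme = PHONEME_TO_VISEME.get(symbol, "neutral")
        keyframes.append((round(start * FPS), viseme))
    return keyframes


# "beautiful" with made-up timings in seconds.
beautiful = [("B", 0.00), ("Y", 0.05), ("UW", 0.10), ("T", 0.25),
             ("AH", 0.30), ("F", 0.38), ("AH", 0.45), ("L", 0.52)]

for frame, viseme in to_keyframes(beautiful):
    print(frame, viseme)
```

In a real pipeline the timings would come from aligning the voice track to its transcript rather than being typed by hand.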